Can an AI Understand Consciousness Better Than We Can?

A recent paper in Nature Neuroscience suggests it might — and that's both thrilling and humbling

In April, I read Michael Pollan’s new book, A World Appears, and I’ve been sitting with what I’d call a bit of scientific discomfort ever since. Pollan frames the central problem of consciousness research with a familiar image: can you ever read the label from inside the bottle? We are conscious beings trying to study consciousness using the very apparatus we’re trying to understand. Rereading that sentence is enough to give me a mild headache. [If you don’t have time to read the book right now, I encourage you to check out Ezra Klein’s interview with Pollan.]

Every neuroscientist and philosopher who has wrestled with the hard problem of consciousness (i.e., why does subjective experience exist at all?) is working from inside the bottle. Pollan’s book did a fantastic job of widening my aperture on the theories of consciousness, and on the possibility that consciousness may be the one scientific problem where being human is more liability than asset.

The timing of a paper published last month in Nature Neuroscience was a fortunate coincidence, and it has me thinking about how AI might be able to get around some of these challenges. Since I do not (yet) believe that AI is conscious, perhaps we can get a look at ourselves, our label, from “outside the bottle.”

What they did

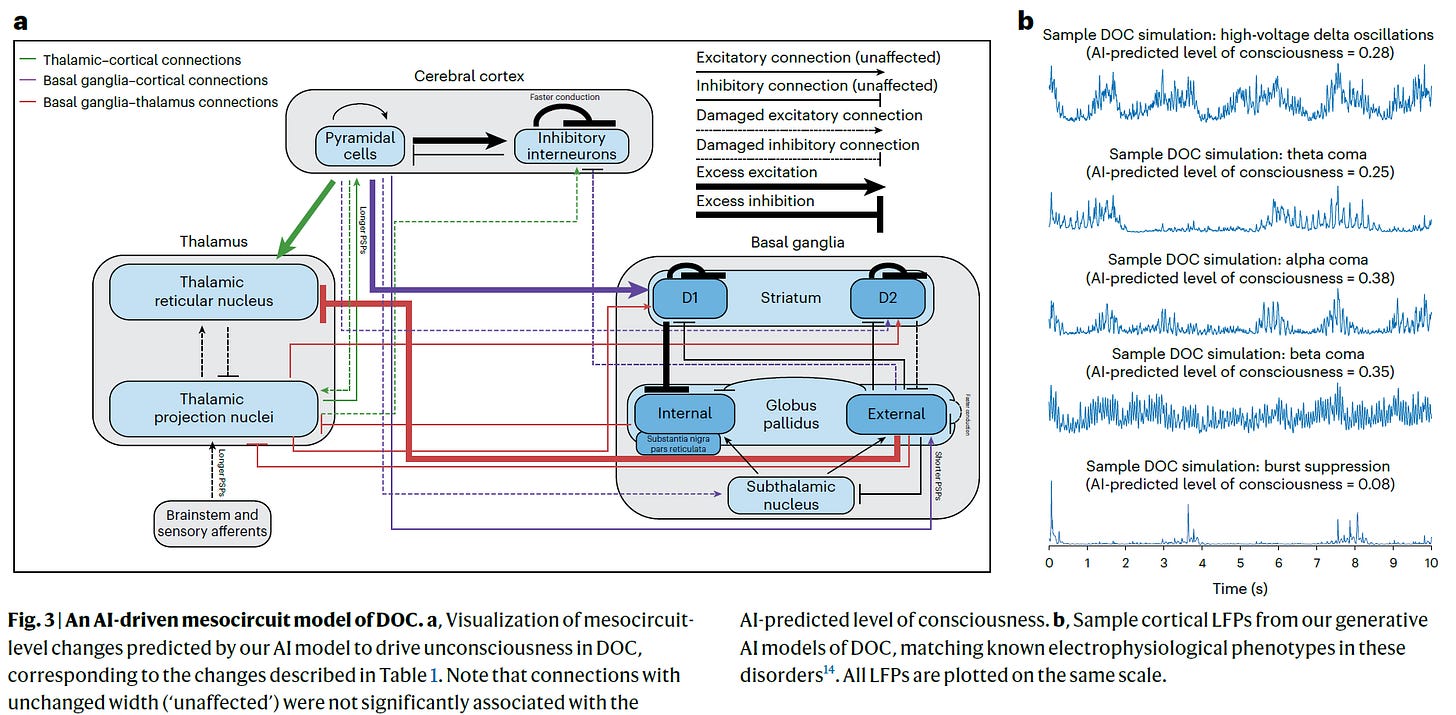

Daniel Toker and colleagues at UCLA built an adversarial AI framework: two models pitted against each other in a kind of game. One learned to detect levels of consciousness from EEG and other electrophysiological brain recordings. The other generated biologically realistic simulations of conscious and unconscious brains. In each round, the generator produced simulated unconscious brain activity and the detector tried to distinguish those simulations from real conscious brains, and both got better. What emerged from that competition, without either model being told what to look for, were testable predictions about what actually goes wrong in coma, vegetative states, and minimally conscious states. I think this kind of hypothesis generation is a great use of AI in science right now.
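For readers who like to see the mechanics, here is a toy sketch of that kind of two-player game. To be clear, this is not the paper's code or architecture: it is a generic one-dimensional adversarial loop I am assuming for illustration, and every name in it (`adversarial_game`, `mu`, `w`, `b`) is hypothetical. The "recordings" are single numbers, the generator has one knob (`mu`, where it centers its simulated samples), and the detector is a simple logistic classifier.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def mean(values):
    return sum(values) / len(values)


def adversarial_game(real, noise, rounds, lr=0.1):
    """Toy 1-D adversarial loop (illustrative only, not the paper's method).

    real  : list of numbers standing in for real recordings
    noise : fixed offsets the generator adds to its center mu
    Returns a list of (mu, w, b) tuples, one per round.
    """
    mu = 0.0          # generator parameter: center of the simulated samples
    w, b = 0.0, 0.0   # detector: p(real | x) = sigmoid(w * x + b)
    history = []
    for _ in range(rounds):
        fake = [mu + z for z in noise]

        # Detector step: push p up on real samples and down on simulated ones
        # (one gradient step on the usual cross-entropy loss).
        p_real = [sigmoid(w * x + b) for x in real]
        p_fake = [sigmoid(w * x + b) for x in fake]
        grad_w = (mean([-(1 - p) * x for p, x in zip(p_real, real)])
                  + mean([p * x for p, x in zip(p_fake, fake)]))
        grad_b = mean([-(1 - p) for p in p_real]) + mean(p_fake)
        w -= lr * grad_w
        b -= lr * grad_b

        # Generator step: move mu so the updated detector rates the
        # simulated samples as more "real" (loss = -log p on fakes).
        p_fake = [sigmoid(w * (mu + z) + b) for z in noise]
        grad_mu = mean([-(1 - p) * w for p in p_fake])
        mu -= lr * grad_mu

        history.append((mu, w, b))
    return history


if __name__ == "__main__":
    for mu, w, b in adversarial_game([2.0, 3.0, 4.0], [-1.0, 0.0, 1.0], rounds=3):
        print(f"mu={mu:.4f}  w={w:.4f}  b={b:.4f}")
```

In the first round of this toy, the detector learns that larger values look real, and the generator nudges its simulated samples in that direction. The real framework works on rich brain recordings and biologically realistic simulations rather than single numbers, but the back-and-forth has the same shape.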

The training set was notable for its breadth: over 680,000 ten-second neuroelectrophysiology samples from humans, monkeys, rats, and bats. The AI wasn’t given a theory of consciousness to test. It just learned the patterns, from the outside.

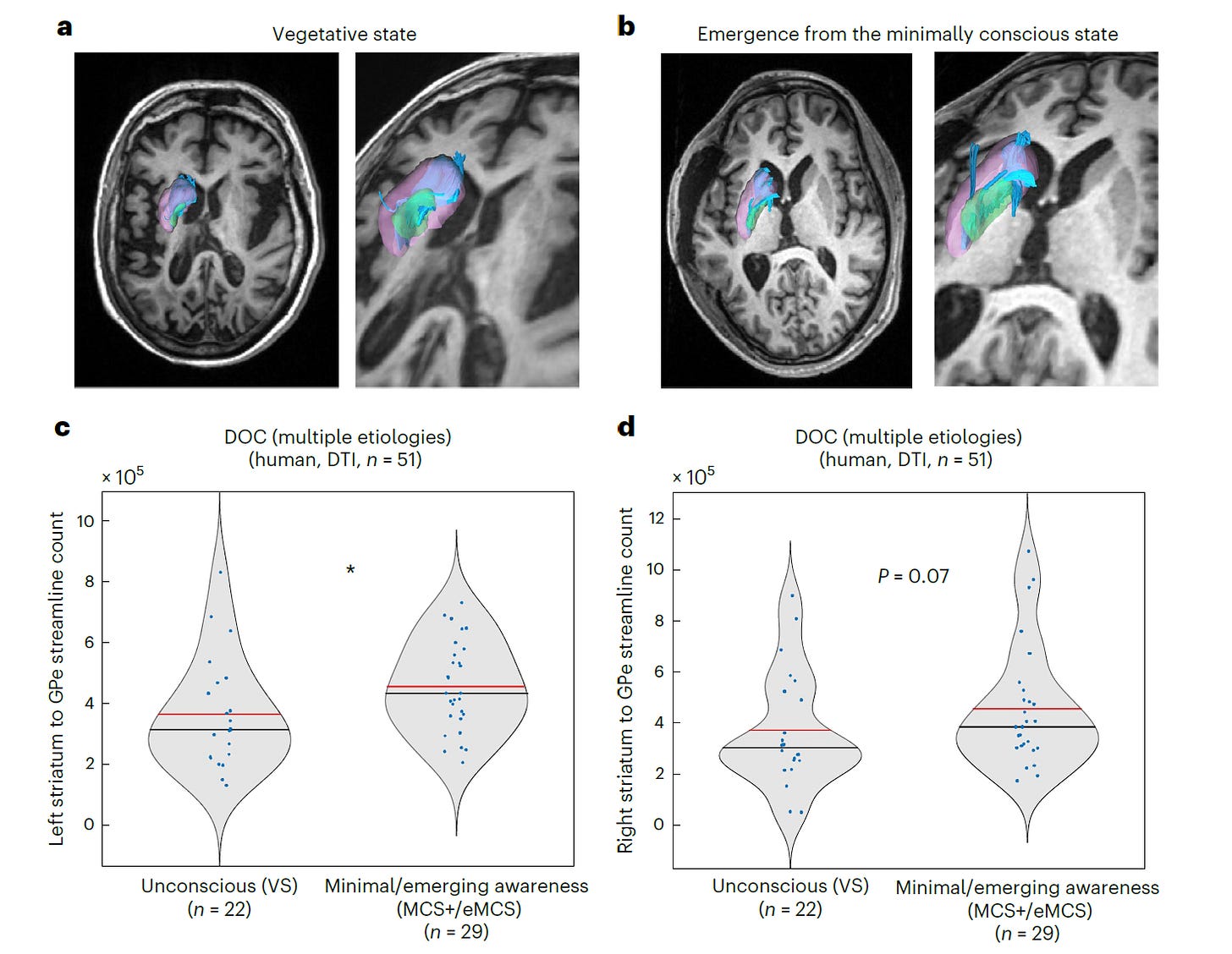

Without being programmed to, the model identified two specific circuit disruptions it predicted would drive unconsciousness: selective damage to the basal ganglia indirect pathway, and abnormal inhibitory wiring in the cortex. Both were then validated against patient data: the basal ganglia finding in diffusion MRI from 51 patients with disorders of consciousness (DOC), and the cortical wiring changes in RNA sequencing of resected brain tissue from 6 human patients in coma. The model also flagged subthalamic nucleus stimulation as a potential therapy, with preliminary EEG support from 6 patients with cervical dystonia, though these were conscious patients, not DOC patients. The clinical leap remains large.

Before you think we’re about to solve the hard problem of consciousness: important caveats

I find this work really interesting, but a few things give me pause. These are less critiques of the paper than observations about where the science currently stands. For example, the MRI validation cohort of 51 patients is meaningful but small for claims of this magnitude. The RNA sequencing validation is even more limited: 6 patients, with unmatched controls, and the cortical finding hasn’t yet been confirmed histologically. The basal ganglia result also isn’t entirely novel; the role of subcortical circuits in consciousness has been suspected for years, so the AI may be partly rediscovering known biology. And the distance between a circuit-level hypothesis and a bedside treatment is long. Very long, unfortunately.

There’s also the deeper question Pollan’s book kept raising: the AI learned correlates of consciousness, i.e. patterns that distinguish conscious from comatose brains, but that’s not the same as understanding consciousness. The hard problem remains untouched. What the model found is perhaps a map of what breaks. Why there’s something it feels like to be conscious in the first place is a question no dataset, however large, has yet answered.

Why it still matters

All of that said, an approach that doesn’t try to introspect on consciousness but instead learns its neural fingerprints from the outside is new (though I’m sure readers might point me to earlier work!). The bottle has an outside. Whether this particular paper has found the right way to read it, or just one promising way among many, remains to be seen.

What’s your reaction to AI being used to study consciousness? I’d love to hear in the comments.

It's becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean: can any particular theory be used to create a machine with human-adult-level consciousness? My bet is on the late Gerald Edelman's Extended Theory of Neuronal Group Selection (TNGS). The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I've encountered is anywhere near as convincing.

I post because in almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there's lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, possibly by applying to Jeff Krichmar's lab at UC Irvine. Dr. Edelman's roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461 and a video of Jeff Krichmar talking about some of the Darwin automata is at https://www.youtube.com/watch?v=J7Uh9phc1Ow